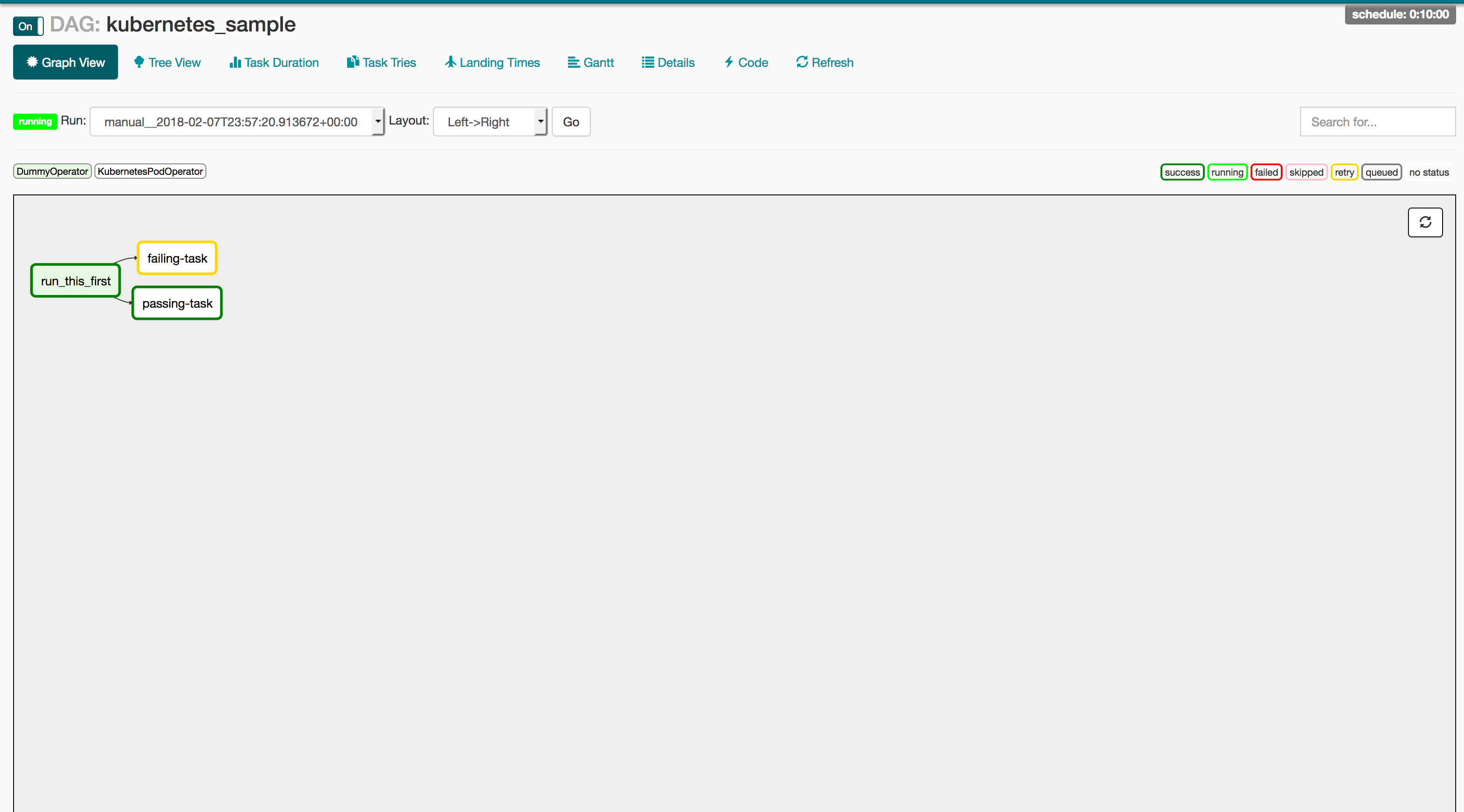

Since they receive the parameters from an external source, will they keep the same parameters when they will be reprocessed? To check that, I cleaned the state of one of the executions of hello_world_a. So, if you have some problems in your logic and restart the pipeline, you won't see already processed messages again - unless you will never retry the router tasks and only reprocess triggered DAGs which in this context could be an acceptable trade-off.Īnother point to analyze related to replayability concerns externally triggered DAGs. First, our "router" DAG is not idempotent - the input always changes because of non-deterministic character of RabbitMQ queue. That's why I will also try the solution with an external API call.Īside from the scalability, there are some logical problems with this solution. Hence, if you want to trigger the DAG in the response of the given event as soon as it happens, you may be a little bit deceived. This section provides an overview of the API design, methods. It works but as you can imagine, the frequency of publishing messages is much higher than consuming them. To facilitate management, Apache Airflow supports a range of REST API endpoints across its objects. In the following image you can see how the routing DAG behaved after executing the code: Python_callable=trigger_dag_with_context, You can find an example in the following snippet that I will use later in the demo code: In order to enable this feature, you must set the trigger property of your DAG to None. But it can also be executed only on demand. External triggerĪpache Airflow DAG can be triggered at regular interval, with a classical CRON expression. The second one provides a code that will trigger the jobs based on a queue external to the orchestration framework. From reading a several posts here: Airflow S3KeySensor - How to make it continue running and Airflow s3 connection using UI, I think it would best to trigger my Airflow DAG using AWS lambda which will be called as soon as a file lands on the s3 folder. The first describes the external trigger feature in Apache Airflow. If your dag is more complex and depends of specific start and end times then this approach might not work or may need to be extended a little.The post is composed of 3 parts. This assumes your dags are just using typical params like “ ds” etc and so only need the execution_date to run properly. So you can just increment the 00:00:01 part to 00:00:02 if you need to rerun the same backfill again for some reason (like you messed up your “fix” the first time around □ ). You would run it like this: python airflow_trigger_dags.py -dag 'my_beautiful_dag' -start ' 00:00:01' -end ' 00:00:01'įor the dag you pass, it will loop over each day and kick off a dag run for the same timestamp you define.

So here is a little Python script to just loop over a range of days and kick of a dag run for each day.

The “new” REST API helps and mean’s all the building blocks are there but, as I found out today, there can often still be some faffing about left for you to do. It’s 2022 and this is still surprisingly painful with Airflow. By default the experimental API is unsecured, and hence before we continue we should define an authbackend which secures it. In this blog post we will use it to trigger a DAG. You have some dag that runs multiple times a day but you need to do a manual backfill of last 30 days. The experimental API allows you to fetch information regarding dags and tasks, but also trigger and even delete a DAG.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed